Aberdeen Group recently published a report titled Preventing Virtual Application Downtime, written by senior research analyst Jim Rapoza. The report claims that 59% of applications today, including mission-critical ones, are virtualized, and Aberdeen believes it is entirely reasonable to expect that share to rise to 80% or more in the near future.

The report notes that application downtime is incredibly expensive: the average cost of an hour of downtime is $686,250 for large companies, about $216,000 for medium-sized companies, and about $8,500 for small companies. To avoid these costs, leading organizations have implemented high-availability and fault-tolerant systems and processes to prevent application downtime. Aberdeen suggests that “Best-in-Class” organizations pair high-availability software and fault-tolerant systems with processes that give them a heightened ability to monitor and understand virtual applications and to detect and address potential issues.

Aberdeen found that 80% of all businesses use high-availability software and 56% use fault-tolerant servers. So it appears that many businesses are employing best practices to keep their critical virtual applications running and to avoid the high costs of downtime.

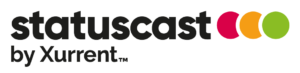

Here’s the odd thing: according to Aberdeen’s research, only 46% of organizations, on average, measure the performance of virtualized devices. The same percentage measure end-user satisfaction, and only 20% measure the ROI of implementing virtualization. In the words of Jim Rapoza, “if you don’t measure the performance of something, how can you ensure that it has high availability and no downtime?”

Although many organizations take steps to prevent application downtime, they don’t take them optimally. Even “Best-in-Class” organizations can’t claim 100% uptime. Yes, you need to do your best to prevent downtime, but at some point you will go down.